Release notes

The following release notes cover the most recent modifications or additions to Intrinsic software. You can find information on new features, breaking changes, bug fixes and deprecations for the Intrinsic platform and Flowstate.

Version 1.29 (March 30th, 2026)

For the specific VM versions in this release:

- Intrinsic runtime version: 0.20260316.0-RC13

- IntrinsicOS version: 20260226.RC02

Infrastructure

- The HMI now provides options to reboot and shut down the IPC. Reboot lets you power-cycle the IPC to restore the cell after an issue. Shutdown lets ECs power down the cell to save energy when no production is planned.

- Retain asset text logs to disk, making them eligible for inclusion in recordings

- Cloud Zenoh routers are enabled in multi-tenant projects

- Adds the ability to specify consistency for kvstore writes via RPC. Default remains high.

- Support custom durations in create_recording skill.

- Renamed all default vmpools from `vmpool-defaultconf` to `default` and from `vmpool-defaultconf-gpu` to `default-gpu` for a more concise naming pattern with less boilerplate. Function is unchanged and the rollout of this change should be without downtime. Marked as breaking because existing scripts may reference the old names, e.g. `inctl vm lease --pool vmpool-defaultconf-gpu` needs to be changed to `inctl vm lease --pool default-gpu`. Adoption is assumed to be low so far, so the probability of breaking utility scripts is very low.
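Scripts that still reference the old pool names can be updated mechanically. The following is a minimal sketch, not an official migration tool: the helper name `migrate_pool_names` is hypothetical, and it assumes only the two renames listed in this note.

```python
import re

# Old default pool names mapped to their new names (taken from this note).
# The longer name comes first so the "-gpu" variant is matched before its prefix.
POOL_RENAMES = {
    "vmpool-defaultconf-gpu": "default-gpu",
    "vmpool-defaultconf": "default",
}

# Match an old name only when it is not part of a longer pool name.
_OLD_POOL = re.compile(
    r"(?<![\w-])(" + "|".join(re.escape(name) for name in POOL_RENAMES) + r")(?![\w-])"
)


def migrate_pool_names(script_text: str) -> str:
    """Return script_text with old default pool names replaced (hypothetical helper)."""
    return _OLD_POOL.sub(lambda match: POOL_RENAMES[match.group(1)], script_text)


print(migrate_pool_names("inctl vm lease --pool vmpool-defaultconf-gpu"))
```

Custom pool names that merely start with an old default name, such as `vmpool-defaultconf-custom`, are left untouched by this pattern.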

- Fixed an issue where high-consistency KVStore writes to the same key from multiple threads or processes would race and the losing writes timed out. Callers now receive the proper `abort` event.

- Reduces log noise from Python skills

Perception

- Upgrade and do all necessary refactoring for V1.0 camera protos

- Stream camera images with a certain frame rate from cameras in the workcells

- The displayed camera view frustum now corresponds to the one set in the camera sdf file and hence in simulation renders exactly what is inside the visualized view frustum. Previously the visualized view frustum did not correspond to the frustum used for rendering.

- intrinsic_proto.perception.v1alpha1.SymmetryService provides methods to detect symmetries of 3d meshes

- Cameras will automatically try to clear faults and reset themselves after e.g. control was lost due to a short network or power outage.

- Updated the scan barcodes python example to use the v1 camera client.

- Updated the perception python API to v1

- When deserializing image buffers in python the pixel type is now attached to the numpy dtype metadata

- Fixed an issue where the last digits of a camera ID were cropped when searching for its stream. For cameras with very similar IDs, such as two Baslers (Basler-a2A3840-13gmPRO-40537040 and Basler-a2A3840-13gmPRO-40537041), the search always matched the first camera, so its stream was displayed for both cameras.

Process Development

- Processes created from the Flowstate UI are now assets by default. This allows them to benefit from common Asset tooling and functionality, such as sharing and installation management. Existing (legacy) Processes can be upgraded to assets easily. This can be done per-Process by selecting a Process and using the upgrade button in its side panel. To upgrade all legacy processes in a solution, select "Process" > "Upgrade..." from the menu bar. Creation of legacy (non-Asset) Processes is still available for a limited time to enable transitioning existing workflows without disruption. This is deprecated and should only be used when absolutely necessary.

Important [Breaking]:

This change is non-breaking in the graphical UI and it also does not change the execution behavior. However, for Processes which have been migrated to Assets, installation and retrieval through APIs is now done with the InstalledAssetService instead of the SolutionService. See documentation for further details.

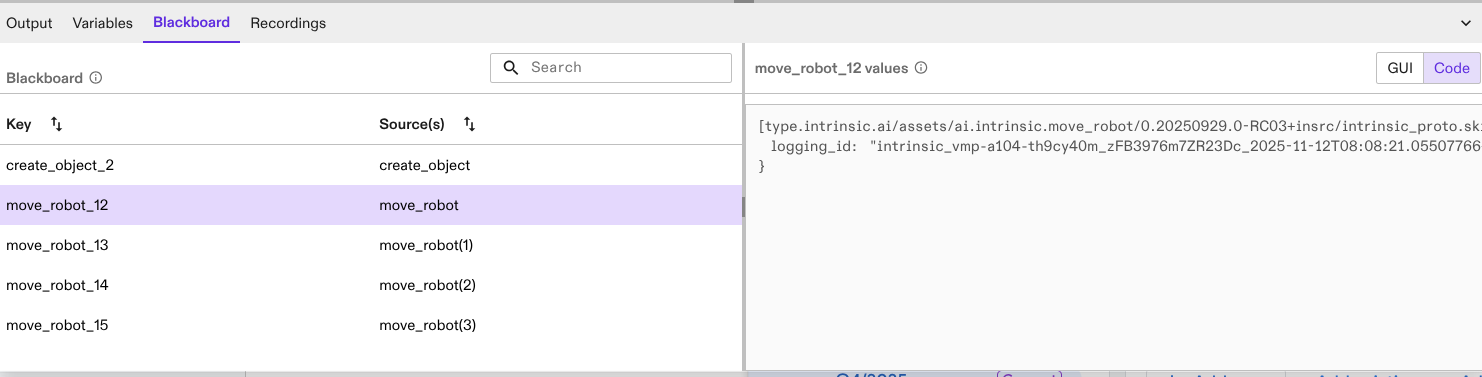

- Methods for inspecting the blackboard are available in the solution building library under `executive.operation.blackboard`.

- Processes can be loaded into the executive by providing the asset id of an installed Process asset instead of providing the full behavior tree proto.

- In the solution building library, `BehaviorTree.find_tree_and_node_id()` now returns a `NodeIdentifier` object instead of a tuple. The function now fails when it finds a node that does not have an identifier.

- Specifying `type_url` on `GetNamedFileDescriptorSet` in the proto registry may no longer return the full descriptor set of the asset referenced in the type URL. Use `asset_id` with the ID from the type URL if you require the old behavior.

- Behavior trees with conditions edited in the frontend can be loaded in the SBL without losing the conditions.

- Fix an issue where cancelling a skill is not registered correctly when the cancellation happens at exactly the same time that the skill is started.

- This also fixes a rare situation where Process execution can get stuck in the cancelling state when the cancellation request happens at exactly the same time that the skill is started.

- When copying a skill such as `run_python_script (get -dim_z/2)` with an input variable `z` and refactoring the name and logic to `y`, it still requires the `z` variable as input to successfully execute. Without the unused `z` variable, `get -dim_y/2` does not work.

Robotics

- A user interface is now available in simulation for setting digital inputs to true/false and analog inputs to a specific value (float).

- annotate grasp works deterministically with the same object for all runs

- Placement constraints can be added to the parts

- Placement position is defined automatically based on the object information and shapes

- Allow the user to use metadata from the gripper to avoid collisions between the object and the gripper

- Allow parallel execution of ADIO read and set skills.

SDK & Development Environment

- The `simulation` argument for direct calls of `solutions.Execution` in the SBL has been removed. Using `solutions.Execution` directly is discouraged. Users should migrate to use `Solution.executive` instead (recommended) or update their calls to `solutions.Execution` to remove the `simulation` argument (not recommended). The `solutions.simulation` module is deprecated and will be removed in future.

- APIs for previously supported “Products” concepts have been deleted from the SDK, including the Solution Building Library. Users should use SceneObject Assets instead.

- Corrected a display error in the VS Code extension where installed services appeared as JSON strings; they are now presented in a clear, human-readable format.

Workcell Design & Simulation

- Users can change the name of the geometries in the object instance editor.

- Product APIs are deleted from the SDK, including Solution Building Library. Users should use SceneObject assets instead.

- Spawned objects in sim have the same name as in the belief world. Requires update to `CreateObject` skill and `Spawner` service.

- Objects with primitive shapes can be trained using pose estimators.

- Fixes an issue where a solution fails to deploy in sim due to the simulator failing to start up for the initial scene. When deploying a solution in sim, Gazebo is currently initialized before the declared ports in `gzserver` and `simulation-service` are ready. So solution deployment is blocked in sim on Gazebo being ready. This can add a deployment time cost of several seconds while Gazebo starts up. In the worst case, the solution deployment can be blocked for several minutes and appear to be stuck. This change unblocks solutions to be deployed in simulation without waiting for Gazebo to be ready. Users can make changes to the Initial scene without waiting for Gazebo to be deployed.

- This fixes an issue where the memory consumption of Gzserver increased heavily when a camera has been added. Memory use is now stable.

- The `Simulation::Reset` API is deprecated in the Solution Building Library. Instead, please set the optional `start_from_world_state` parameter when calling `executive.run()` / `executive.run_async()` / `executive.start()` to specify what world state the process/behavior tree should be started from. See `sdk-examples/notebooks/002b_executive_and_processes.ipynb` for example usage of the `start_from_world_state` parameter.

Customer Support

- Poses would unexpectedly change while editing or moving the robot in the execute tab

- When jogging a robot selected in the frontend with the jogging base frame set to "world", moving "up" moved the robot down instead.

- The executive got stuck in the cancelling state. It seemed that the reason was preplan-motion.

Version 1.28 (February 23, 2026)

For the specific VM versions in this release:

- Intrinsic runtime version: 0.20260209.0-RC04

- IntrinsicOS version: 20260128.RC01

Perception

- Support for the Zivid 3 has been added, and all Zivid camera models now feature near and far plane parameters to define their optimal working volume.

- To provide more precise 3D modeling and improved field-of-view estimations, the Photoneo hardware resource has been modified to model-specific assets.

Infrastructure

- Get information about a single pool using the new inctl vm pool describe command

inctl vm pool describe --pool {pool_name} --org {your_org}@{project_name}

- Removes the previous limit on the number of log items per page in the `inctl logs cp` command to improve the retrieval speed of structured logs.

- Fixed a bug in the text log API that had caused some logs to be truncated.

- Fixed an issue with the sorting functionality in the Text log viewer API.

- The create_recording skill automates the creation of solution recordings, capturing structured logs from a defined timespan.

Process Development

- Extended status error messages will now be accompanied by AI generated summaries, providing a natural language explanation of the encountered error.

SDK & Development Environment

- The Intrinsic Extension now writes the project name to the user config file. This fixes issues in SBL where `deployments.connect_to_selected_solution()` was failing due to missing project information.

- Corrected a display error in the VS Code extension where installed services appeared as JSON strings; they are now presented in a clear, human-readable format.

- SDK examples now contain scripts illustrating the use of solution recordings, which capture structured logs from a defined timespan. See README

Workcell Design and Simulation

- The `Create Product` import UI has been removed. Users should use the 'Create SceneObject(s)' assets workflow to import their 3D models instead.

- Products are no longer shown in the Installed Assets panel.

- Products are no longer supported and thus the product service is obsolete. Users should use `SceneObject` assets instead. If your solution still uses the product service, please uninstall it through the SolutionEditor.

product_reader dep from estimate_sheet_parts skill

- The `estimate_sheet_parts` skill only works with `SceneObjects` assets. Support for Product has been dropped.

- Adds an interactive kinematic control panel to the SceneObject import workflow, enabling users to validate joint movements and limits through real-time jogging and slider manipulation. The user can test and validate an object's kinematics directly without needing to install or author services or a separate simulation setup.

world_tree to use signals and ChangeDetection.OnPush

- Fixed performance issue in the world tree UI that was causing significant UI slowdowns for scenes with many objects (100+)

- The Motion Planning Inspector automatically highlights 3D objects which dominate the computation time during collision checking. Additionally, toast notifications will alert user to the presence of these objects when detected and provide resources to help optimize performance.

ICON

- The default behavior for enforcing Cartesian acceleration constraints has been updated to limit the overall (2-norm) Cartesian acceleration of the end effector. This change better aligns with the common use case of limiting total motion speed, and may result in lower maximum acceleration or deceleration than in previous versions.
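To illustrate the difference, the sketch below compares a per-axis limit check with a limit on the 2-norm of the acceleration vector. It illustrates the math only and is not ICON's implementation; the function names and the sample values are made up for this example.

```python
import math


def within_per_axis_limit(accel, limit):
    """True if every Cartesian component stays within +/- limit."""
    return all(abs(a) <= limit for a in accel)


def within_norm_limit(accel, limit):
    """True if the Euclidean (2-norm) magnitude stays within limit."""
    return math.sqrt(sum(a * a for a in accel)) <= limit


def scale_to_norm_limit(accel, limit):
    """Scale accel uniformly so that its 2-norm does not exceed limit."""
    norm = math.sqrt(sum(a * a for a in accel))
    if norm <= limit or norm == 0.0:
        return tuple(accel)
    return tuple(a * limit / norm for a in accel)


# A diagonal acceleration of 3 m/s^2 on each axis (illustrative values).
accel = (3.0, 3.0, 3.0)
limit = 3.0
print(within_per_axis_limit(accel, limit))  # the per-axis check passes
print(within_norm_limit(accel, limit))      # the 2-norm is about 5.2, so this fails
print(scale_to_norm_limit(accel, limit))
```

Under a 2-norm limit the same diagonal motion is scaled down on every axis, which is why the maximum acceleration or deceleration may be lower than in previous versions.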

Version 1.27 (February 5th, 2026)

For the specific VM versions in this release:

- Intrinsic runtime version: 0.20260126.0-RC07

- IntrinsicOS version: 20251126.RC01

Account & Administration

- The Flowstate portal now offers the ability to make branches of a Solution

A set of connected Solution branches forms a Solution tree. Solution trees allow you to:

- Experiment on a copy of a Solution version in a child branch, without cluttering your main branch’s version history

- Copy a Solution version from one branch to another

- Move branches within, into, or out of a tree, so that you can migrate from using standalone Solutions to using Solution trees

- Group deployments as child branches of your “template” Solution, with each workcell having its own version history

Note that this feature does not include diff/merge. Learn more.

Control & Motion

- All trajectories are now validated with a safety check prior to execution. This reduces the risk of collisions, with a small increase in motion planning time.

- Grasp planning now can optionally account for placement constraints upfront, ensuring grasps are selected to best support successful object placement.

- You can now define a minimal contact region on the gripper fingers that must be covered by the object surface, enabling more robust and reliable grasps.

Infrastructure

- The onprem `kvstore_service` is now accessible externally through Ingress. Applications running externally can use this to set, update, and delete keys for a particular workcell.

- A simple KVStore client library is now available as part of the SBL to interact with a VM or workcell’s KVStore.

- Visualization_msgs/MarkerArray messages that are captured in Intrinsic recordings are now supported and will be shown as part of visualizations of those recordings.

- You can now restore your IPC network configuration from automatically created backups that are confidential and securely stored in the Intrinsic Cloud. This enables you to get your system connected faster in the case of a misconfiguration or to set up new systems with your default config. All relevant information can be found in our documentation.

- Self-service pool management now supports zero-downtime changes to runtime and intrinsicOS versions for VMPools. Documentation is available under BUILD → Get started → Build with code → CI/CD workflow guide.

- Improved exception handling in Intrinsic Pubsub python libraries.

Process Development

- Loop and retry node counters are now in sync with their configured `loop_counter_blackboard_key` and `retry_counter_blackboard_key` while a process is running. Any updates to these keys, such as via the blackboard service, immediately affect the active node state. If you manually configure counter keys, each LoopNode and RetryNode running in parallel must use a unique key, as shared keys will now cause state conflicts and unintended execution flow. Flowstate automatically generates unique keys, so no changes are required when using the defaults.
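If you configure counter keys manually, a quick duplicate check can catch conflicts before a process runs. The sketch below works on plain dictionaries rather than real behavior tree protos: the node dictionaries and the helper `find_shared_counter_keys` are hypothetical, and only the two key field names come from this note. It does not check whether the nodes actually run in parallel, so it is stricter than strictly required.

```python
from collections import defaultdict

# Field names taken from the release note; everything else is illustrative.
COUNTER_KEY_FIELDS = ("loop_counter_blackboard_key", "retry_counter_blackboard_key")


def find_shared_counter_keys(nodes):
    """Return {counter_key: [node names]} for keys used by more than one node."""
    users = defaultdict(list)
    for node in nodes:
        for field in COUNTER_KEY_FIELDS:
            key = node.get(field)
            if key:
                users[key].append(node["name"])
    return {key: names for key, names in users.items() if len(names) > 1}


# Hypothetical node descriptions: two nodes share the key "counter".
nodes = [
    {"name": "loop_a", "loop_counter_blackboard_key": "counter"},
    {"name": "retry_b", "retry_counter_blackboard_key": "counter"},
    {"name": "loop_c", "loop_counter_blackboard_key": "loop_c_counter"},
]
print(find_shared_counter_keys(nodes))
```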

- To assist with debugging, you can now inspect the output from Python script nodes—including “print()” statements—directly within the sequence list after a process has been executed.

- The executive service's CreateBreakpoint API has been updated: attempting to establish a breakpoint where one already exists now triggers an "already exists" failure immediately. This replaces the previous behavior where the call would initially succeed but fail later during runtime.

- Fixed an issue where an empty page or partial results may appear when listing assets in the catalog.

SDK & Development Environment

- Asset-specific install and release commands (e.g. `inctl skill install`) that were deprecated back in October 2025 have now been removed. Use the generic `inctl asset …` commands instead.

SubscribeToSignal added to GPIOClient

- A new `SubscribeToSignal` function has been added to `GPIOClient` to simplify subscription to signals.

Workcell Design & Simulation

UpdateUserData field

- The `UpdateObjectProperties` RPC of ObjectWorldService has been updated so you can now modify a WorldObject's data via the `UpdateUserData` field.

- Adds support for mesh simplification and isotropic remeshing during scene object import and instance editing. These operations help optimize meshes to improve performance for tasks such as path planning and simulation.

- The start process dialog will now report which objects will change when resetting the belief world from initial.

- You can now transform visual geometries for a link entity from the instance editor, similar to colliders.

Version 1.26 (Dec 15th, 2025)

For the specific VM versions in this release:

- Intrinsic runtime version: 0.20251208.0-RC02

- IntrinsicOS version: 20251126.RC01

Control & Motion

plan_grasp and grasp_object have been removed

- Please use their equivalent replacements: `gripper_object/collision_excluded_eoat` -> `collision_excluded_eoat_parts`, `pose_estimates.category` -> `pose_estimates.object_category`, `grasp_bbox_zone` -> `grasp_zone`, `product_part_name` -> `grasp_zone.object_category`.

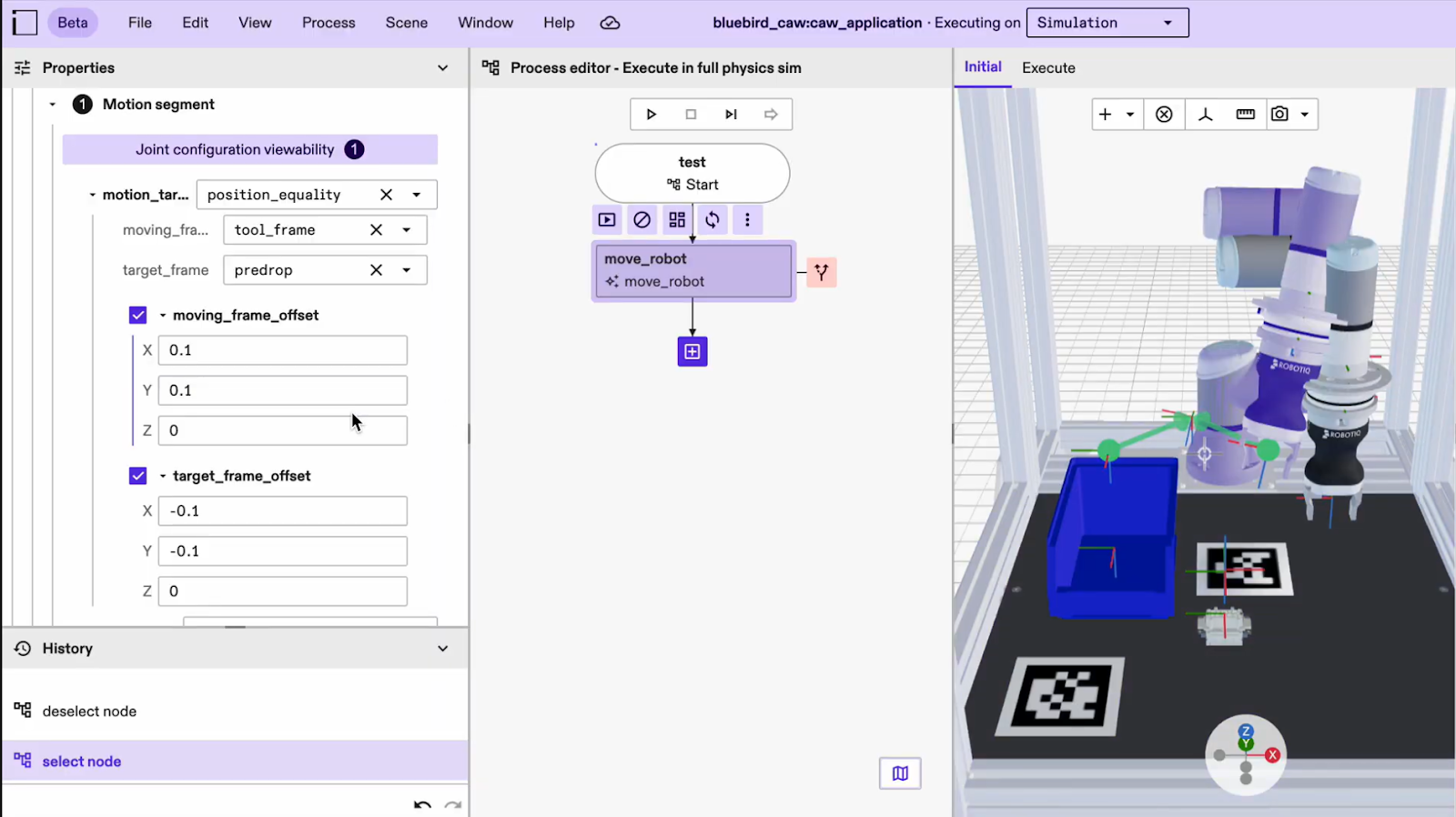

move_robot skill now supports visualization for both rotation cone and bounding box constraints.

- You now get a visualization preview that makes constraint configuration much clearer, particularly for rotation cones, which were previously difficult to interpret. This helps you see the effect of your adjustments and understand how the robot is likely to solve the motion.

move_robot skill now has a "shortcutting_combine_collinear_segments" parameter to shorten planning time

- Using the "shortcutting_combine_collinear_segments" parameter in the “Move robot” skill, you can now enable faster motion planning for motions that are mostly straight lines in joint space, but at the expense of slightly longer path lengths and trajectory durations. To use this functionality you may need to update your move_robot skill.

- This feature enables the use of analog inputs with FANUC robots by:

- Storing 16-bit integer values in FANUC digital inputs using a background logic program.

- Configuring the FANUC hardware module driver to convert these 16 digital input values back to analog values in Flowstate. Analog outputs function similarly. More information can be found in our FANUC stream motion documentation.
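The round trip between one 16-bit value and 16 digital signals can be sketched as follows. This only illustrates the bit packing: the bit order (least significant bit first) and the function names are assumptions, and the actual background logic program and driver configuration are covered in the FANUC stream motion documentation.

```python
def pack_uint16(value):
    """Split an unsigned 16-bit integer into 16 booleans, least significant bit first."""
    if not 0 <= value <= 0xFFFF:
        raise ValueError("value must fit in 16 bits")
    return [bool((value >> bit) & 1) for bit in range(16)]


def unpack_uint16(bits):
    """Rebuild the unsigned 16-bit integer from 16 booleans (LSB first)."""
    if len(bits) != 16:
        raise ValueError("expected exactly 16 digital values")
    return sum(1 << bit for bit, is_set in enumerate(bits) if is_set)


# Round trip: the value written on one side is recovered on the other.
raw = 0xABCD
digital_inputs = pack_uint16(raw)
assert unpack_uint16(digital_inputs) == raw
```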

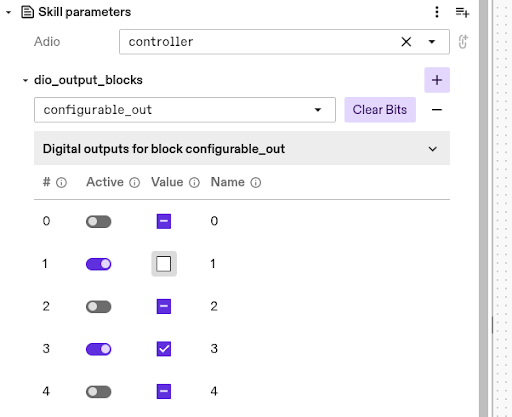

dio_set_output skill now has custom UI for selecting blocks and setting bits

- Available output blocks and bits are read from the selected realtime control service. This allows selecting the output block from a dropdown, as well as selecting bits from the UI. Note: Adjusting the parameters is temporarily limited when the realtime control service is critically faulted.

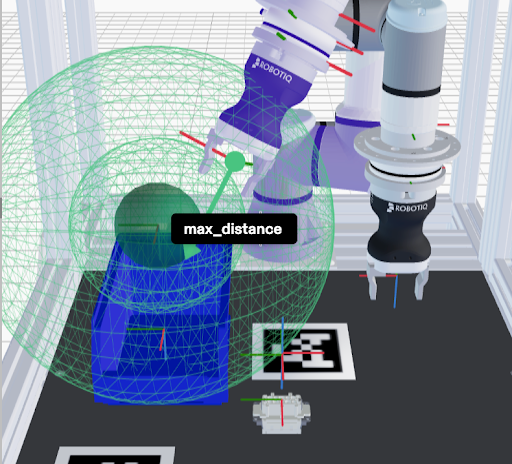

- You now have full visualization of the point-at constraint in the `move robot` skill. This makes it easier to understand what the robot is doing as you adjust the point-at constraint parameters. It also helps you clearly see the relationship between the minimum, maximum, and threshold radiuses and how they relate to the moving axis.

- You can now visualize how each offset frame relates to its parent frame. Several motion segments and constraints in the “Move robot” skill include frame offsets from either the moving frame or the target frame. This improvement helps you more easily understand and manage skills that involve both. It is especially helpful in situations where both a moving and a target frame are present, which can become confusing without clear visualization.

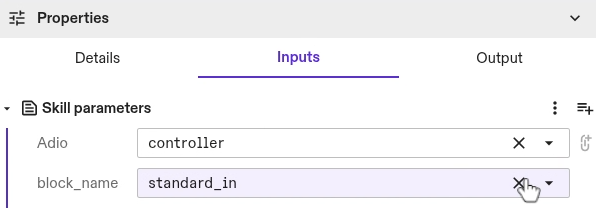

- The digital block to read can now be selected from a list, instead of manually looking up the correct string. String input is used as a fallback in case the lookup fails.

- If you previously attempted to jog a robot without application or system limits, the frontend would fail silently and behave in unexpected ways. Now, you will see a display error to alert you of the misconfiguration.

Documentation

- There is a known issue with the documentation site not loading properly in some cases. You will need to do a hard refresh (Ctrl+Shift+R) or (Cmd+Shift+R) on the browser to fix the issue.

Infrastructure

- You can now configure IntrinsicOS, so it shuts down gracefully in the case of a power outage using a UPS connected via USB or network. To configure a UPS, please refer to our UPS documentation.

- You can now restore your IPC configuration from an automatically created backup, which is created on every IPC configuration change. To restore, you can download the config file from the IPC manager and upload it using the local configuration interface on the IPC. Detailed instructions can be found in our documentation.

- You can now stream logs from services and hardware devices as well as skills. The previous skill-only dropdown has been replaced with a searchable asset-selection panel, letting you choose multiple installed assets using grouped checkboxes.

- With this launch you can now use recordings regardless of whether you are running your solution on an IPC or a VM. This enables you to access recordings as a debugging/troubleshooting mechanism earlier in the development cycle, without the need for any hardware commissioning. Please refer to our documentation for more information.

- The text logging viewer is redesigned and now has the ability to query logs for all assets (previously only allowed skills).

Perception

- The camera-to-robot calibration UI will no longer function until required updates are applied. To restore functionality, you must install the calibration_service from the asset catalog, add an instance of it to your solution, and update the collect_calibration_data skill to the latest version.

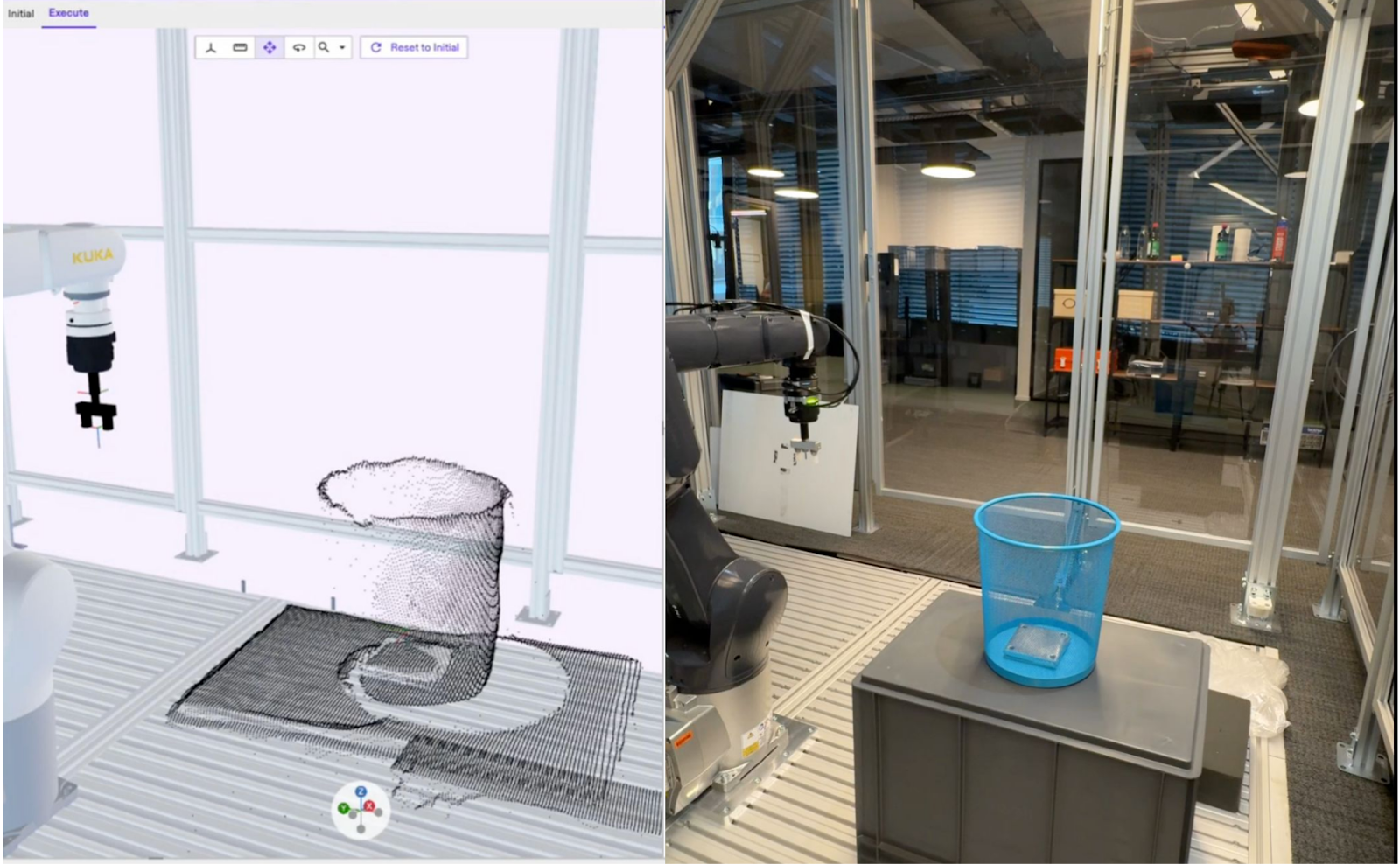

- This enables retrieval of the ROI via an HMI and the ability to inject the ROI into a digital twin for visualization.

- You can now use a powerful new service to compute a 3D reconstruction of a scene. By providing a set of stereo pairs, which are two cameras capturing the same scene from different viewpoints, you can generate an accurate 3D model of the environment. This reconstruction can help you with tasks such as collision avoidance for unknown objects or retrieving depth images for other downstream processes. You can access this functionality directly through the estimate_point_cloud skill.

- Training jobs now display both progress percentage and ETA. You’ll also see your queue position, based on your user tier and GPU availability, along with the projected date when you can submit your next training run.

- Simulation now supports selecting depth, point sensor, or both image types in `Capture` requests for RGBD cameras. Previously, only the point sensor image was available when running in simulation.

- You can now use a Manual inference mode for pose estimation. This update gives you full control over when the pose estimation is performed, helping you avoid long inference times caused by complex parameter changes. You can make all the desired parameter adjustments before running the calculation, ensuring faster and more efficient results.

Process Development

- The process variable panel now has a search field to filter which variables are shown. In addition to that, the actual values are now shown on the right side of the panel with the opportunity to show either the proto or a UI representation of it.

- A new panel has been added next to the process variables panel. This new panel shows the blackboard content after executing a process. The blackboard contains keys with output data for each skill or node that produces such output data during the run. This can help you inspect skill return values after a process run.

SDK & Dev Environment

- In the skill "dio_set_output", the deprecated parameters "block_name", "indices", and "values" are removed. Existing solutions will continue to work since they tag the skill version. If you use these skill parameters, edit your skill to use the repeated field "dio_output_blocks" instead.

- We are deprecating the field `geometries` in `intrinsic_proto.world.GeometryComponent.GeometrySet` in favor of a `named_geometries` field. During the migration, the deprecated `geometries` field is kept for clients that read a `GeometryComponent` or create a `GeometryComponent` from scratch. Clients are advised to migrate client code to use the new `named_geometries` field. Breaking: If the client code gets a `GeometryComponent` proto from the world, modifies `GeometryComponent.GeometrySet.geometries` in place, and then uses this modified `GeometryComponent` in a subsequent world mutation request, the modified geometries will not be read. The user is advised to modify `GeometryComponent.GeometrySet.named_geometries` instead.

inctl asset release commands will be removed

- Individual `inctl <asset-type> release` commands will be removed in the next platform release V1.27. Use `inctl asset release` instead.

- The previously deprecated `inctl skill logs` command has now been removed. Please use `inctl logs --skill` instead, which in addition allows streaming logs from multiple assets at the same time.

- Processes will now be handled as assets with their own asset type in solutions. To prepare for this, the SBL has been extended to handle assetized processes (create, save, delete). Process assets can be accessed under `solution.processes.<>` (previously `solution.behavior_trees.<>`). Usage is explained in more detail in the example notebook. All changes are backwards compatible, such that legacy processes are still supported.

- Introducing SkillCanceller::Wait/StopWait. This update fixes a long-standing lifetime issue with skill cancellation. Previously, any callback you wrote had to capture references (often an icon::Session) that didn’t live long enough, outliving the skill but not the SkillCanceller. This made safe cancellation difficult or impossible. With this change, you no longer need to rely on callbacks inside skills. You use a slightly more explicit pattern, but in return you get a memory-safe and thread-safe cancellation mechanism that behaves reliably in all scenarios.

Any proto in the callback to python

- The Python PubSub bindings now support subscription without the need to specify which protobuf message type is expected. This function overload will provide the proto `Any`.

- You no longer need to build a separate web server, backend, and frontend to create an HMI service. There is now a specialized service asset that handles the web-server component for you. The content to host on the web server is provided through a data asset, following the platform’s existing asset model. The static content service asset is available in the catalog, and its source code is published in the sdk-examples repository, along with instructions on building it from source and creating the required data asset. This gives you a flexible starting point that you can customize without having to start from scratch.

- To match the license change of the SDK to Apache-2.0, the SDK examples are now also licensed Apache-2.0. Using the Apache-2.0 license helps users navigate license concerns because it is well known, permissive, and OSI-approved.

- A new interface-based dependency system enables Assets to interact with gRPC services and proto data provided by other Assets, allowing Skills, Services, and HardwareDevices to seamlessly use interfaces exposed by Services, HardwareDevices, and Data Assets.

Workcell Design & Simulation

Product as a concept will be removed in the next major release. Use scene object assets instead

- Product-specific skills like `clear_product_objects`, `request_product`, `remove_product` and `sync_product_objects` are considered deprecated and are no longer maintained. Instead, please use the `create_object` and `remove_object` skills. The corresponding functionality is now provided directly via scene object assets.

- You can now fully preview and validate objects and devices during the import process. Before creating an asset, you can:

- Preview the object or device.

- See mesh details, such as face count, to understand complexity.

- Preview the results of mesh simplification before applying them.

- Preview material properties and their effects.

- View the object's origin and all associated frames.

- Review the entity tree structure.

- Upload a new geometry file if the original selection was incorrect.

- Navigate, measure, and inspect the object just as you would in the main scene.

- These improvements give you immediate visual feedback, helping you avoid common issues such as selecting the wrong file, applying mesh cleanup without understanding the outcome, working with overly complex meshes, or discovering incorrect origin frames only after assetization. This early-stage visibility saves time, reduces errors, and makes the import process more reliable.

plan_grasp and grasp_object have been removed

- Please use their equivalent replacements: `gripper_object/collision_excluded_eoat` -> `collision_excluded_eoat_parts`, `pose_estimates.category` -> `pose_estimates.object_category`, `grasp_bbox_zone` -> `grasp_zone`, `product_part_name` -> `grasp_zone.object_category`.

geometries in intrinsic_proto.world.GeometryComponent.GeometrySet will be removed in favor of a named_geometries field

- The deprecated `geometries` field remains temporarily available for clients that read or construct a `GeometryComponent`, but you should begin migrating your client code to the new `named_geometries` field. Breaking change: If your client retrieves a `GeometryComponent` from the world, modifies `GeometryComponent.GeometrySet.geometries` in place, and then uses that modified component in a subsequent request, those changes will no longer be applied; all updates must now be made through `named_geometries` instead.

position_bounding_box field for outfeed service configuration will no longer be supported

- Please use the `remove_object` skill instead to specify the bounds dynamically during process execution.

spawner service configuration fields will no longer be supported

- Please use the `create_object` skill instead to specify the randomization arguments during process execution.

- All world calls are now optimized and can significantly improve various world-related operations, for example making motion planning faster. The level of improvement scales with the number of objects in the world, with scenes with more objects benefiting the most.

ImportSceneObject RPC response now includes visual and collision geometry summary statistics (e.g., bounding box, volume) for the imported object

- The summary statistics provide properties of the imported scene that help verify the quality of the geometries that compose the scene. Unexpected values in the summary statistics are an indicator that the uploaded scene may have degeneracies and/or requires repair.

CreateObject skill supports specifying the naming schema for the created objects.

- This improvement will provide you more control over how the objects are named during the process execution.

register_geometry_v1 to ObjectWorldClient python client

- As part of the migration of geometry protos to v1.geometry, a new method `register_geometry_v1` has been added to the `ObjectWorldClient` Python client. Please use the new `register_geometry_v1` as a replacement for `register_geometry`.

- You can use a keyboard shortcut (Shift+ArrowDown) to snap an object to the surface below it. For example, you can now conveniently snap objects to the floor of the enclosure.

- Fixed an issue where small pose changes (less than 1mm) in the scene editor were being ignored.

Reset to initial no longer fails due to ICON real-time control service configuration error

- Previously the action would fail with an error message saying `Failed to reset simulation`. With this fix, the action succeeds, but the ICON real-time control service may continue to be in an `Error` state.

Version 1.25 (Oct 27th, 2025)

For the specific VM versions in this release:

- Intrinsic runtime version: intrinsic.platform.20251023.RC05

- IntrinsicOS version: 20250912.RC01

Control & Motion

- If you write your own skills or services using ICON clients, and are also using the `intrinsic_proto.icon.v1.Condition` proto message type, you will need to update the included dependencies to include the `intrinsic/icon/proto/v1/condition_types.proto` file and corresponding targets.

move_robot skill now supports motion events based on Cartesian arc-length and time-based offset

- You can now define motion events for the `move_robot` skill using Cartesian arc-length relative to the start and end of the trajectory. This allows for more precise control, enabling you to trigger I/O changes based on traversal of motion trajectories.
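
To illustrate what an arc-length based motion event means, the sketch below computes the cumulative Cartesian arc length of a sampled path and finds the sample at which an event would fire for a given offset from the start or from the end. It is not the `move_robot` implementation; the function names and the sampled-path representation are assumptions made for illustration only.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def cumulative_arc_length(points: Sequence[Point]) -> List[float]:
    """Returns the Cartesian arc length travelled at each path sample."""
    lengths = [0.0]
    for previous, current in zip(points, points[1:]):
        lengths.append(lengths[-1] + math.dist(previous, current))
    return lengths


def event_index(points: Sequence[Point], offset: float, from_end: bool = False) -> int:
    """Returns the first sample index at or past the event position.

    With from_end=False the offset is measured from the start of the path,
    otherwise it is measured backwards from the end of the path.
    """
    lengths = cumulative_arc_length(points)
    target = lengths[-1] - offset if from_end else offset
    for index, length in enumerate(lengths):
        if length >= target:
            return index
    return len(points) - 1


# Hypothetical L-shaped path with unit-length segments.
path = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0), (2.0, 1.0, 0.0)]
print(event_index(path, 1.5))                 # event 1.5 units after the start
print(event_index(path, 0.5, from_end=True))  # event 0.5 units before the end
```

Measuring the offset along the path rather than in time keeps the trigger position fixed in space even if the velocity profile of the trajectory changes.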

Infrastructure

- You can now use a new set of inctl commands for VM and VM pool management. These commands allow you to lease VMs with specific versions and configurations, manage VM lifecycles by extending, shortening, or returning them early, and create dedicated VM pools tailored to your team or project needs. For full command syntax, please refer to the inctl documentation. New APIs are documented in the gRPC reference section.

Assets

- JSON output from `inctl asset list_released_versions` is now a list of descriptions rather than a dictionary containing an "asset" element as a list of descriptions. Please update any scripts to account for the change in data type.

- The deletion_strategy field and enum have been removed. Please remove any usages of the field in calls to AssetDeploymentService.DeleteResourceRequest. Previously built assets will continue to function normally.
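
For scripts that consume the JSON output of `inctl asset list_released_versions`, a minimal sketch of a parser that accepts both the old dictionary shape and the new list shape could look like the following. The helper name and the fields inside each description are hypothetical; only the top-level change (a dictionary with an "asset" list versus a plain list) comes from the note above.

```python
import json
from typing import Any, List


def released_versions(raw_json: str) -> List[Any]:
    """Returns the list of asset descriptions from either output shape."""
    data = json.loads(raw_json)
    if isinstance(data, dict):
        # Old shape: {"asset": [...descriptions...]}
        return data.get("asset", [])
    # New shape: [...descriptions...]
    return data


# The "name" field is a made-up placeholder, not the real description schema.
old_output = '{"asset": [{"name": "example"}]}'
new_output = '[{"name": "example"}]'
assert released_versions(old_output) == released_versions(new_output)
```

Accepting both shapes lets the same script run against SDK versions from before and after this release.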

Perception

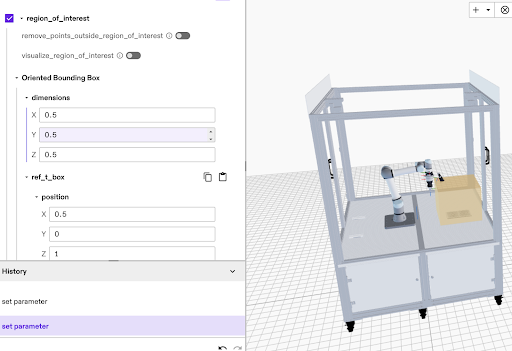

- To improve precision and reduce processing time, you can now define a specific region of interest for pose estimation which will restrict the visible working volume.

SDK & Dev Environment

- You can now use the command line for deploying solutions using `inctl solution start <solution-name>`. Previously, starting solutions was only possible through Flowstate Portal. This new functionality is particularly beneficial for automated continuous integration (CI) workflows, as you can specify the solution to deploy using its solution name.

- The `inctl logs` command now supports multiple targets in a single command, allowing you to monitor log output from a single terminal. This can help you stream logs from different skills or services.

`inctl logs` will now serve as the main command to stream logs of skills and services. The `inctl skill logs` command will be deprecated and removed in an upcoming release.
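
As a rough sketch of how `inctl solution start <solution-name>` might be called from a CI job, the helper below builds the command and runs it with the Python standard library. Only the three-word command and the solution-name argument come from the note above; the helper names are hypothetical, and any additional flags your setup needs (for example to select an organization or cluster) are not shown and should be taken from the inctl documentation.

```python
import subprocess
from typing import List


def start_command(solution_name: str) -> List[str]:
    """Builds the argument list for `inctl solution start <solution-name>`."""
    if not solution_name:
        raise ValueError("solution_name must not be empty")
    return ["inctl", "solution", "start", solution_name]


def start_solution(solution_name: str) -> None:
    """Runs the command; raises CalledProcessError on a non-zero exit code."""
    subprocess.run(start_command(solution_name), check=True)
```

Passing the arguments as a list avoids shell quoting issues when solution names contain spaces or special characters.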

Workcell Design & Simulation

- Some geometry proto fields have been renamed in intrinsic/geometry/proto/geometry_service.proto and intrinsic/geometry/proto/geometry_service_types.proto with a `_v0` suffix in preparation for deprecating these fields and replacing their usage with v1 geometry protos. The generated code for GeometryService and related protos has been updated, and client code should be adjusted accordingly.

- Deprecated `_v0` proto fields in intrinsic/geometry/proto/geometry_service.proto and intrinsic/geometry/proto/geometry_service_types.proto. For most GeometryService clients, changing the type of proto messages involved should be enough. If a GeometryService client has changed client code to `_v0` suffixed fields, the client should switch to the newly introduced v1 proto fields without the `_v0` suffix. The `_v0` fields will be removed in an upcoming release.

OrientedBoundingBox3

- The OrientedBoundingBox3 skill parameter will now be visualized as 3D boxes in the scene editor.

Version 1.24 (Oct 6th, 2025)

Control & Motion

- CiA/DS402 devices connected via EtherCAT can now be homed (define the 0 position) through Flowstate using the homing skill. As a prerequisite, the EtherCAT hardware module needs to be configured to expose the homing interfaces, and the real-time control framework (ICON) needs to be configured to interface with them.

- When controlling multiple robots with distinct robot control service instances, Flowstate would in some cases only update the belief world position of one of the robots. Now all robots are tracked correctly in the belief world.

Documentation

- You can now preview our AI-powered documentation search which is still under development. With this feature, you may extract better explanations and answers from Intrinsic documentation, especially when the complete answer may be in multiple sources. You'll find it under the "?" menu (top-right of the Flowstate Solution Editor) by selecting Search documentation (preview).

- A complete reference for protos and gRPC APIs is now available in the SDK. You can view it here. The gRPC and proto reference serves as a comprehensive list of the interfaces that are used in the Intrinsic SDK. You can use this reference to get an understanding of the functionality available through our platform API.

Infrastructure

- Text logs are now part of Intrinsic recordings and replays when the workcell is in “operate” mode.

Process Development and Execution

- You can now import and export multiple processes at once in Flowstate using the new Import and Export options in the Process menu. This enables moving multiple processes from one solution to another much more efficiently than before.

- The DRAFT execution mode option has been renamed to PREVIEW throughout APIs, the solution building library and Flowstate. This now aligns with terminology on skill level, as the functionality is based on the `preview` interface of skills. For API use: Please update all occurrences of `execution.Executive.SimulationMode.DRAFT` with `execution.Executive.SimulationMode.PREVIEW`.
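
If you have many scripts to update, the rename can be applied mechanically. The following is a minimal sketch of such a text migration; the helper name is hypothetical and the two identifiers are taken verbatim from the note above. Review the result before committing, since a plain text substitution does not understand imports or aliases.

```python
import re

OLD = "execution.Executive.SimulationMode.DRAFT"
NEW = "execution.Executive.SimulationMode.PREVIEW"

# The trailing \b leaves longer identifiers that merely start with DRAFT untouched.
_PATTERN = re.compile(re.escape(OLD) + r"\b")


def migrate_source(text: str) -> str:
    """Returns the source text with the DRAFT mode replaced by PREVIEW."""
    return _PATTERN.sub(NEW, text)


print(migrate_source("mode = execution.Executive.SimulationMode.DRAFT"))
```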

SDK & Dev Environment

`inctl data {list, list_released, uninstall}`, `inctl service {list, list_released, list_released_versions, uninstall}`, and `inctl skill {list_released, list_released_versions, uninstall}` have been removed. Use the equivalent `inctl asset ...` command instead.

- Support for generic protos (e.g., skill returns) in loop nodes using for-each generators is deprecated. Please note that for-each generators themselves were already deprecated. We recommend using while loops instead. For guidance, refer to the following examples: Data and For-Each example, Dataflow example.

- Tooling has been added to the SDK for defining, installing and releasing both hardware devices (as long as they reference existing scene objects in the asset catalog) and data assets. The tooling for hardware devices is the `intrinsic_hardware_device` bazel rule, the `inctl asset install` command for installing a hardware device into a solution, and the `inctl asset release` command for releasing it to the asset catalog. The tooling for data assets is the `intrinsic_data` bazel rule, the `inctl asset install` command for installing a data asset into a solution, and the `inctl asset release` command for releasing it to the asset catalog.

Workcell Design and Simulation

- Removed the previous restriction requiring the lower joint limit of new pinch gripper hardware devices to be 0. You can now define any finite lower and upper joint limits, as long as the upper limit is strictly greater than the lower limit. This change provides greater flexibility in configuring pinch gripper kinematics to better match your CAD models. Please view our documentation for gripper creation.

- Fixed an issue where a solution would crash when the gripper config was empty.

- Fixed an issue where sim, combined, and belief views in the Execute tab did not show updated robot and object motions (e.g. after jogging a robot) until a process was run or "Reset to Initial" was clicked.
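
The relaxed pinch gripper joint limit rule described above (any finite lower and upper limit, with the upper limit strictly greater than the lower one) can be expressed as a small check. This is only a sketch of the stated rule, not the validation code used by Flowstate, and the function name is hypothetical.

```python
import math


def validate_joint_limits(lower: float, upper: float) -> None:
    """Raises ValueError if the limits violate the rule stated in the release note."""
    if not (math.isfinite(lower) and math.isfinite(upper)):
        raise ValueError("joint limits must be finite")
    if upper <= lower:
        raise ValueError("upper limit must be strictly greater than lower limit")


# A non-zero lower limit is now acceptable (values are made up for illustration).
validate_joint_limits(-0.01, 0.04)
```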

Version 1.23 (Sep 1, 2025)

Account & Administration

- Flowstate now supports solution versioning, making it easier to iterate on your work without having to continuously save different copies of your solution. With versioning, you can commit snapshots of your Solution to build a complete version history over time. If needed, you can restore any previous version directly from the Solution Editor or Portal for quick recovery and uninterrupted development. All versions are permanently saved and remain unchanged once created. Learn more.

Control & Motion

- The real-time control service now allows certain hardware components to remain active even when operational hardware (e.g., robots) is disabled or faulted. This enables independent control tasks—such as handling door access requests or setting digital outputs over a fieldbus—to continue running. The disable_realtime_control and enable_realtime_control skills can selectively affect operational hardware, allowing more flexible control behavior across the workcell. With this feature, you can wait for access requests, cleanly pause robot streaming control and implement a pause in a process. Learn more.

- The skills “Enable motion” and “Disable motion” have been renamed to “Enable realtime control” and “Disable realtime control” to better reflect that they only affect the real-time control service and its connected hardware—not all motion. Existing solutions are unaffected, as the previous skill versions are preserved. New solutions should use the updated skill names.

- You can now use Flowstate while the EtherCAT and GPIO dialogs are open. This enables you to observe GPIOs while parameterizing skills or configuring ICON.

- Jogging a robot in the Initial view now behaves as expected—positions no longer reset after movement. Additionally, joint jogging correctly updates the intended joints.

- Fixed an issue where the motion planning inspector could not open logging IDs if the org name had underscores.

- Fixed an issue that caused the debug tool "run_plan_trajectory_offline" to fail with errors.

- Fixed an issue where ENI upload was not working when using Flowstate.

- Fixed an issue where small motions of type relative_cartesian_pose resulted in no motion.

Infrastructure

- IntrinsicOS now supports network configuration via a local web interface. You can configure network interfaces, NTP server, Proxy server and a log server. The configuration can be changed pre-registration of an IPC as well as after the IPC is registered in Flowstate. Refer to our documentation on how to access the configuration pages. If you install IntrinsicOS on a new IPC, these features will be only available after you update the IPC to the latest version. This will be changed with the next release.

- You can now configure a log server (e.g., a SIEM system) to receive system logs from IntrinsicOS. This feature provides a secure and reliable way to forward crucial system data to a centralized analysis platform. Logs sent include system events such as SSH connections and system reboots, which are essential for complying with audit-logging and security analysis requirements. Refer to our documentation on how to locally access the configuration pages.

- The logger now allows setting custom retention per data stream, enabling certain data streams like extended status messages to be retained for up to 7 days on-premise. Learn more.

Perception

- To use perception-related skills and UI elements in Flowstate (e.g. training, calibration wizard, scene alignment), you must now install a perception service via the “Add service” button in the Services panel. This enables you to select specific versions of pose estimation and calibration algorithms, ensuring compatibility and allowing quicker testing of fixes by installing updated versions. It's recommended to install only one instance of the perception service (named “perception”). UI components that require perception will automatically connect to an installed perception service instance, but if multiple are installed, one will be selected at random. To avoid this, keep only one instance by deleting extras in the UI. If no instance is installed, you’ll see a prompt to add one. Make sure all perception skills are updated to the latest version. If you're generating behavior trees via scripts, ensure the perception parameter points to the correct installed service.

Process Development

- Skills that fail as a result of an unmet precondition now show as “Failed” in the sequence list instead of “Selected”.

Solution Editor

- Previously, catalog assets that were installed but had no instances in the solution were not saved or shown anywhere in the solution. As a result, they were not reinstalled after saving and redeploying the solution.

Version 1.22 (Aug 11th, 2025)

Account & Administration

- Each organization can now assign the admin role to one or more members through the “people” tab in Account Settings (accessed via the person icon in the top-right corner of Flowstate portal). Admins have the ability to self-manage seats within their organization, including appointing or removing other admins, inviting users, resending or canceling invitations, and removing users. If you’d like to be added as an admin, please contact your Intrinsic point of contact.

Control & Motion

- If you manually modified a hardware module config and added SimBusModuleConfig, note that while most deprecated fields require no action, `resource_ids_for_devices` must be replaced with `new_sim_api_config` (`geometry_asset_name: 'my_resource'`), but only if `my_resource` differs from the name of the associated resource.

- You can now design and deploy solutions using the FANUC M20id/25 robot, available in the asset catalog in Flowstate.

- This new software extension enables you to configure EtherCAT topology (ENI), add devices via device description (ESI) and scan the EtherCAT bus for configuration or debugging reasons.

move_gripper_joints skill now handles joint limits

- The `move_gripper_joints` skill now checks the initial joint position against limits before executing the movement. If the initial position is out of the limits, it raises an error. If the position is within the tolerance of the limits, the initial position is clipped to the limits.

- Jerk-limited motions are now up to 10% faster on average.

- Fixed an issue where running a cached motion plan after changes to the robot’s application limits could result in motions that exceed the newly configured limits. For example, if the “Move robot” skill was used, followed by a reduction in joint velocity limits, and then the skill was run again, the motion could still reflect the previous (higher) limits—potentially exceeding the new constraints. To ensure correct behavior, please update your solutions to the latest platform version. Refer to our instructions on how to update an IPC.

- Fixed an issue where in some instances the I/O panel was not appearing in the settings tab.
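
The joint limit handling described for `move_gripper_joints` above can be pictured with the sketch below. It assumes one reading of the note: a position that exceeds a limit by no more than a tolerance is clipped to that limit, and anything further outside raises an error. The function name and the tolerance value are hypothetical, and this is not the skill's actual code.

```python
def checked_initial_position(
    position: float, lower: float, upper: float, tolerance: float
) -> float:
    """Returns the position clipped to [lower, upper], or raises if too far outside."""
    if position < lower - tolerance or position > upper + tolerance:
        raise ValueError(
            f"initial position {position} is outside limits [{lower}, {upper}]"
        )
    # Within tolerance of a limit (or inside the range): clip to the limits.
    return min(max(position, lower), upper)


# Slightly past the upper limit: clipped instead of rejected.
print(checked_initial_position(0.0505, 0.0, 0.05, tolerance=0.001))
```

Clipping small violations avoids spurious failures caused by encoder noise near the end of travel, while larger violations are still reported.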

Perception

- Create one-shot pose estimation models in just minutes. Unlike traditional AI systems, this model can be prompted with any object’s CAD model to instantly detect and estimate its pose within a scene—no additional training required. It adapts seamlessly to new objects, environments, and lighting conditions. While this feature is available in Flowstate, access is currently limited. If you're interested in exploring one-shot pose estimation, please contact your Intrinsic point of contact for more information.

- This new API enables operators to monitor camera health during production. HMI builders can use it to inform operators on past faults and let them clear existing faults directly from the camera.

- A new capture button has been added to Flowstate during Intrinsic calibration so you can now manually trigger frame acquisitions. This can be used to acquire a select set of frames for the calibration.

Process Development

- The sequence list for executed processes now includes expanded visibility into sub-processes when their entries are expanded. This enhancement is especially useful for nested processes that include "estimate_pose" skills, as their image results are now visible directly within the sequence list. Additionally, entries now display node names instead of skill IDs for improved readability.

SDK & Dev Environment

- Upgrade Protobuf to 30.1 and gRPC to 1.72 to resolve a build failure related to system_python in workspaces initialized with inctl bazel init. To ensure compatibility in existing workspaces, follow the update instructions to update your MODULE.bazel file.

- To fix warnings when building sdk-examples, please use ParseFromString instead of ParseFromCord.

- You can now create services in Python to write digital outputs and read digital inputs from I/O modules on your system. This enables custom skills and services to control hardware devices like light stacks, grippers, tool changers, conveyors and more to be authored in Python and not be constrained to C++ as a development language.

Solution Editor

- Service states are now visible in the Service Manager, giving you clearer insight into the status of each service instance. Error reporting has been improved, and you can now view detailed error messages, including those from the underlying Kubernetes container, directly in the Service Manager dialog. You can also restart service instances through Flowstate, making troubleshooting and recovery easier.

Workcell Design & Simulation

ObjectWorldClient's reparent_object API supports parenting an object to a frame

- This update adds new functionality to an existing API in the SDK. Unit tests have also been included to confirm that parenting a frame or an object to a frame under another object works as expected.

- Changes to application limits in Flowstate are now automatically kept in sync between the initial and belief worlds. Previously, these limits could become out of sync, leading to inconsistencies between the two.

- Fixed an issue where the relative transforms in Flowstate didn’t match the Python API. Now, the relative frames in Flowstate are calculated using quaternions directly.

- Fixed an issue where child objects were being incorrectly reparented.

- Fixed an issue where large meshes did not apply the scale directly on ingestion and caused timeout later in the pipeline.

Version 1.21 (July 14th, 2025)

Control & Motion

- The `AIO Read Input` skill can be used to read analog signals exposed by real-time controller adio parts and return them from the skill. The `AIO Set Output` skill can be used to set specific analog output signals exposed by real-time controller adio parts.

- To improve debugging, the FANUC hardware module driver now provides a clear, actionable error message when single step mode is enabled. Previously, it only displayed a generic “Could not start program” message.

apply_force_actions skill now has sensed torques as state variables for ICON conditions

- Sensed torque around the axis where torque is applied can now be used as a condition to transition between actions in the apply_force_actions skill using ICON.

move_robot skill has new geometric constraint for relative motions

- The move_robot skill has a new geometric constraint for relative motions. This update makes it easier to define relative movements and enables motion with respect to fixed frames that are not attached to the robot.

- If you have a mix of devices on your EtherCAT bus (e.g., drives and DIOs), and your drives are configured to "disable" on a safety signal, the EtherCAT hardware module driver can now be set up to avoid faulting. This allows DIOs to remain operational while the drives are safely stopped.

disable_motion and enable_motion skills can be used to request a pause in real time control

- The disable_motion and enable_motion skills have been reinstated. You can now use them to disable/enable real time control by shutting down and starting streaming connections to robot hardware. This can help you better control the robot cell and door. The behavior of ClearFaults (in the UI and HMIs) remains the same: real-time control is re-enabled if the fault is successfully cleared.

- To help with grasping circular objects, the annotate_grasp skill and service now support 3-finger grippers and other centric grippers. The centric gripper can be selected as an option in the `annotate_grasp` skill, and its parameters, such as minimum closed radius, maximum open radius, and finger length, can be set using the analog input.

- For automatic grasp annotation, you can now specify grasp constraints to prevent annotating grasp frames in a certain region of interest. This can be specified using the `annotate_grasp` skill.

- You can now recover fatal faults directly from the robot control panel, without needing to manually clear them through the service manager for each hardware driver module.

- Jogging a robot in “Initial” view now provides smoother visual motions and supports undo/redo actions.

- Service manager and EtherCAT dialogs no longer block the use of Flowstate. For instance, the service state can be observed while interacting with the solution.

Infrastructure

- If you encounter the error message “Not supported on real hardware: try starting another solution, a blank solution, or use simulation”, please update your cluster (IPC) to the latest software version. For instructions on updating your IPC, refer to this documentation.

Perception

capture_images skill is now available

- A new capture_images skill is now available in the catalog. This skill allows you to capture sensor images and pass them to other skills, enabling image reuse and dynamic camera setting adjustments at runtime.

- You can now manually select waypoints during the camera-to-robot calibration process, offering an alternative to the existing automatic waypoint sampling method. This provides greater control in situations where automatic sampling may lead to collisions or uncontrolled paths of the robot.

Process Development

- A new execution mode, Fast Preview, is now available for running processes. This mode is designed to rapidly execute the `preview()` implementations of each skill, providing quick insight into what a process will do and whether it is correctly configured. Unlike the existing Draft Sim mode, Fast Preview skips visualization output and runs the process as quickly as possible. In the Flowstate Editor, Fast Preview can be selected in the Execution Settings section of the properties panel on the left side. At the API level, enable Fast Preview by setting the `simulation_mode` parameter when starting an operation with the executive service.

- Proto editor support has been expanded to include all asset types, with added flexibility through code-based editing. This improvement allows asset configurations such as skill parameters and service settings to be modified using either a graphical interface or direct text proto editing.

- Reusable processes can now be uninstalled from the solution through the Uninstall button in the properties side panel. This removes them from the list of installed processes that is shown in the Add Process menu. Skills can also be uninstalled by right-clicking them in the installed assets panel and selecting “Uninstall Asset”.

ExtendedStatus

- Fallback nodes in processes can now be configured to handle specific failure types by targeting specific failures using ExtendedStatus codes. In addition, their visual representation has been enhanced to more clearly show how fallbacks are selected and executed. For further details, please see the documentation.

SDK & Dev Environment

State to SelfState

- If you use the SDK to read or write a service state (for example, you developed a custom intrinsic_service or hardware driver that exposes its state or an HMI that displays a service state), you need to rename `State` to `SelfState` if you see any compiler errors (see proto).

- inctl doctor is a command-line interface tool for automated diagnostic checks of your development environment and configurations. Please refer to our documentation for more information.

Executive::StartOperation call now accepts a scene_id parameter that can be set to an initial world id

- If this parameter is set to a valid world id, the executive belief world will be reset from this 'scene' world id before starting the process. If the solution is deployed in simulation, the simulator will also be reset from this 'scene' world id. Please refer to our documentation for more information.

- A service's manifest now specifies the full name of its configuration proto in ServiceManifest.service_def.config_message_full_name. If no default configuration is specified, the default becomes an empty instance of this message (see proto).

- Added a C++ client library to access the GPIO service (see /intrinsic/hardware/gpio)

- INCTL and INBLD binaries are now also available outside of the Intrinsic development container. You can find them in the assets of the SDK release.

Workcell Design & Simulation

- Solutions with scene objects using the product spawner and product outfeed may no longer work. Please follow our documentation for how to update your solution(s) using new workflows.

ForceTorqueDevicePlugin in world/scene conversion has been deprecated

- This only applies to installing custom hardware devices with force torque sensors to Flowstate. The `<plugin filename="static://giza::simulation::ForceTorqueDevicePlugin">` tag no longer needs to be specified in your device .sdf file and should be removed. If you need to specify sensor noise for simulation, please add a `<force_torque>` tag with the required noise characteristics as per the SDFormat spec.

- User data added to an object during import can now be viewed by selecting the object in the scene tree, clicking the Scene tab in the properties panel, and clicking the 'View object userdata' button.
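
For the ForceTorqueDevicePlugin deprecation above, removing the tag from many device .sdf files by hand is error-prone. The sketch below strips the deprecated `<plugin>` element using the Python standard library. The plugin filename is taken verbatim from the note; the helper name and the sample SDF are hypothetical. Note that this approach re-serializes the file, so XML comments and the XML declaration are not preserved.

```python
import xml.etree.ElementTree as ET

PLUGIN_FILENAME = "static://giza::simulation::ForceTorqueDevicePlugin"


def remove_force_torque_plugin(sdf_text: str) -> str:
    """Returns the SDF text without the deprecated ForceTorqueDevicePlugin tags."""
    root = ET.fromstring(sdf_text)
    # Materialize the traversal first so removals do not disturb the iteration.
    for parent in list(root.iter()):
        for child in list(parent):
            if child.tag == "plugin" and child.get("filename") == PLUGIN_FILENAME:
                parent.remove(child)
    return ET.tostring(root, encoding="unicode")


# Made-up minimal device description for illustration.
sample = (
    '<sdf version="1.7"><model name="device">'
    '<plugin filename="static://giza::simulation::ForceTorqueDevicePlugin" name="ft"/>'
    '<link name="base"/>'
    "</model></sdf>"
)
print(remove_force_torque_plugin(sample))
```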

create_at_frame feature now available within the create_object skill

- The `create_object` skill now supports a `create_at_frame` parameter, allowing you to create objects relative to a specified frame. You can also optionally attach the created objects to that frame.

Version 1.20 (June 9th, 2025)

Control & Motion

- The new `jogging_service.proto` definition enables you to control robot jogging through a gRPC service. The service allows for both joint and Cartesian jogging, with support for specifying jogging frames and defining velocity scaling. It provides methods to start a jogging session, send jogging commands, and query available parts with their respective capabilities and limits. This service can be used to develop custom HMIs.

- For greater flexibility, the new recompute feature in the motion planning inspector lets you modify the input motion specifications of previously logged data and trigger a re-planning of the motion. Additionally, the trajectory playback slider allows you to scrub through the trajectory, updating the robot’s joint values in the displayed world view as you interact with the slider.

- Before, the digital inputs of FANUC robots were only reported when the robot was enabled. Now it is possible to inspect the current digital input values also when the robot is faulted, e.g. due to the emergency stop. This can help visualize input values at all times on an HMI or help during debugging.

- In the robot control panel you can now distinguish between configuration errors (missing realtime control service) and infrastructure errors (where Flowstate is unable to reach the backend to even check whether there is or isn't a realtime control service).

- The file size has increased from a 1MiB limit to a 10MiB limit.

hardware_module_without_geometry now functions properly

- Fixed an issue where ICON fails to connect to the simulated hardware driver module, leading to a broken simulation.

- Fixed an issue where joint angles were being incorrectly displayed using the “execute” view.

- Fixed an issue where Cartesian jogging was switching between world and TCP frames.

- Fixed an issue where the selected FANUC program could not be switched.

Documentation

- We've introduced a redesigned menu structure on our documentation site to better align with the typical journey of building, deploying, and operating solutions. This update introduces a more intuitive organization of content, making it quicker and easier to find what you need. You may notice some content has moved to different sections and certain pages have updated titles. If you’re having trouble locating anything in the new structure, please don’t hesitate to submit a support ticket.

Perception

- You can now create a pose estimator (which might include a training step) via an API call, e.g. from an HMI. Creation progress can then be monitored, and the resulting pose estimator saved to the solution and used in a skill.

- To simplify aligning the belief and real worlds, the scene alignment dialog now includes a snapping feature that automatically matches objects between the real environment and the digital twin. Previously, you could overlay a real-world image with the belief world and manually adjust objects to reduce the sim-to-real gap. With this new feature, you can use pose refinement to semi-automatically align objects, making the process faster, easier, and more accurate.

Process Development

- Improved the startup performance and state handling for process execution in simulation. When a process is played from the frontend, the wait time before executing the first skill is now reduced by up to 50%. A new PREPARING state has been introduced to reflect the transition phase between clicking play and starting execution. If an error occurs during this state, the error panel will display a detailed explanation. Additionally, processes can now be cancelled or suspended during the PREPARING state, just like in the RUNNING state.

SDK & Dev Environment

- Please create hardware modules using `intrinsic_service` instead and install them using `inctl asset install`.

- Please use the asset panel in Flowstate or `inctl asset uninstall` to uninstall a skill. Alternatively, you can update your SDK to the latest release. The hwmodule tool will no longer work.

clear_default has been removed

- Please use `inctl asset update_release_metadata` instead.

- inctl service add now requires an IPC running intrinsic.platform.20250113.RC01 or later and an SDK of intrinsic.platform.20250414.RC03 or later. Unimplemented errors may be seen otherwise.
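
A script can guard against the Unimplemented errors mentioned above by comparing version strings before calling `inctl service add`. The sketch below assumes that versions of the form `intrinsic.platform.<YYYYMMDD>.RC<NN>` order first by date and then by release candidate number; that ordering and the helper names are assumptions, not documented behavior, and other version formats are rejected rather than guessed at.

```python
import re
from typing import Tuple

_VERSION = re.compile(r"^intrinsic\.platform\.(\d{8})\.RC(\d+)$")


def parse_version(version: str) -> Tuple[int, int]:
    """Parses 'intrinsic.platform.YYYYMMDD.RCNN' into (date, release candidate)."""
    match = _VERSION.match(version)
    if match is None:
        raise ValueError(f"unrecognized version format: {version!r}")
    return int(match.group(1)), int(match.group(2))


def meets_minimum(version: str, minimum: str) -> bool:
    """Returns True if version is at least minimum under the assumed ordering."""
    return parse_version(version) >= parse_version(minimum)


MIN_IPC = "intrinsic.platform.20250113.RC01"
MIN_SDK = "intrinsic.platform.20250414.RC03"
print(meets_minimum("intrinsic.platform.20250414.RC03", MIN_IPC))
```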

ai.intrinsic.create_object skill

- We have changed how the `ai.intrinsic.create_object` skill retrieves information about the target object. Please update to a newer version of the skill if executing it returns an error message that says, "invalid view AssetViewType_ASSET_VIEW_TYPE_ALL".

//intrinsic/geometry/ have been consolidated into //intrinsic/geometry/proto

- Please import the generated files from `//intrinsic/geometry/proto`.

- For processes with a selector node, use the branches field instead. In the SBL, call `bt.Selector(branches=[bt.Selector.Branch(condition=<selectionConditon>, node=<childNode>), …])`. In the frontend, load and save a behavior tree created before this change to migrate it.

get_released command

- A new `inctl asset get_released` command has been added to fetch information about a released version of an asset from the catalog.

update_release_metadata

- A new `inctl asset update_release_metadata` command has been added to update the release metadata (e.g., default and org_private flags) of a released version of an asset in the catalog.

Workcell Design & Simulation

- To upgrade, first uninstall any existing spawners and outfeeds in your solutions before adding the newest versions.

Any skills that use these assets (namely `request_product` and `remove_product`) will simply need to be updated. The existing spawners and outfeeds are now deprecated but will continue to work until the next major release.

remove_object skill is now available in the catalog

- In addition to the `create_object` skill introduced in our V1.19 release, we’re also launching a new `remove_object` skill, which lets you remove objects from both the simulation and belief worlds. Together, these skills enable you to create and remove objects directly within your chosen world, making multi-world workflows more seamless and eliminating the need to manually create objects. Using both skills together, you can place objects precisely using a frame, region, or list of poses, or opt for random placement. When removing objects, you can target individual instances or clear all objects within a specific region.

get_object skill is now available in the catalog

- You can now use the get_object skill to get the reference of an object from the object name in a process. When working with objects, you may encounter situations where one skill outputs an object name as a String, while the next skill expects an ObjectReference. You can now use the get_object skill to easily convert a String into an ObjectReference, ensuring smoother skill chaining and reducing manual data handling.

create_object skill now includes the ability to spawn in sim world

- Previously, the create_object skill (ai.intrinsic.create_object) only created objects in the belief world. Now, you can use the new create_in_world parameter to specify an object's creation location, choosing between belief, sim, or belief_and_sim. When you select sim or belief_and_sim, the skill will also create objects in the simulation world. This update makes it easier for you to simulate object creation directly from a scene object asset.

- When importing single-scene objects, you’ll now be prompted to confirm whether you want to create an instance of the imported object in the scene. Additionally, you can specify user data for imported scene objects, either as a text string or using supported protobuf formats. A new Check formatting button lets you validate the user data to ensure it matches recognized protobuf types, helping you maintain data accuracy during import.

- The pose UI in the object panel and skill parameters now share consistent behavior. Copy and paste buttons have been added to skill parameter pose fields, allowing you to seamlessly copy poses between the object panel and skills.

- This fix applies specifically to kinematic scene objects that are neither gripper (hardware) devices nor associated with an ICON hardware module.

Version 1.19 (May 12th, 2025)

Control & Motion

- The Motion Planning Inspector (MPI) helps you visualize historical robot motion planning data, rendering input parameters, output trajectories, and 3D environment snapshots. It highlights any planning errors, making it easier to debug and understand motion behavior.

- Enabling the hardware driver module now triggers an automatic update of the current configuration. Any incorrect configurations will be rejected. For guidance on adjusting the configuration using this new validation feature, please refer to Step 3 in the Fanuc stream motion setup guide.

- You can now design and deploy solutions using three new FANUC M-10iD/12 robot models available in the catalog. You can use our guide to set them up in Flowstate.

- Resolved an issue where sideloaded custom hardware module drivers would fail to initialize properly, ensuring they now start as expected.

- When ICON initialization fails, RobotStatus will now be published on PubSub topics. This ensures that consumers of these topics can accurately report initialization failures instead of experiencing timeouts. Consequently, the output of GetOperationalStatus() and subscriptions to the robot status topic will now be consistent.

- Fixed joints will no longer be counted as joints within WorldScene.

- To improve robustness, GazeboHwm will now initiate the shared-memory server regardless of configuration errors. This update ensures the server starts even when misconfigurations are present.

- The robot control panel now identifies and interacts with the nearest robot ancestor instead of the topmost one when a user clicks an element. This change ensures that the system controls the most relevant robot in the hierarchy.

- Previously, recovering from simulation errors required users to manually reset to the initial state. The RealtimeClock will now be automatically reset during the Prepare() function, eliminating the need for manual resets.

- The websocket jogging API now features improved part selection. The fallback to the 'arm' part has been removed. Consequently, either the designated robot part (gripper, arm, etc.) will move, or no movement will occur, and the frontend will display an error message.

- Added support for copy-pasting joint vectors in degrees, including prismatic joints in the robot control panel’s joint section.
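The nearest-ancestor selection described for the robot control panel in the list above can be illustrated with a small tree walk. This is only a sketch under stated assumptions: `SceneNode`, `is_robot`, and both helper functions are hypothetical names invented for illustration and are not part of the Intrinsic SDK, and it assumes the clicked element itself counts as a candidate.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SceneNode:
    """Hypothetical scene-tree node; not an Intrinsic SDK type."""
    name: str
    is_robot: bool = False
    parent: Optional["SceneNode"] = None


def nearest_robot_ancestor(node: SceneNode) -> Optional[SceneNode]:
    """Walk up from the clicked node and return the first robot found."""
    current: Optional[SceneNode] = node
    while current is not None:
        if current.is_robot:
            return current
        current = current.parent
    return None


def topmost_robot_ancestor(node: SceneNode) -> Optional[SceneNode]:
    """Earlier behavior, for comparison: keep the last robot seen on the way up."""
    found: Optional[SceneNode] = None
    current: Optional[SceneNode] = node
    while current is not None:
        if current.is_robot:
            found = current
        current = current.parent
    return found


# A gantry robot carries a second robot arm, which carries a gripper finger.
gantry = SceneNode("gantry", is_robot=True)
arm = SceneNode("arm", is_robot=True, parent=gantry)
finger = SceneNode("finger", parent=arm)

print(nearest_robot_ancestor(finger).name)  # arm
print(topmost_robot_ancestor(finger).name)  # gantry
```

In a nested setup like this one, clicking the finger selects the arm it is mounted on rather than the gantry at the top of the hierarchy.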

HMI

Perception

- The 'resource_registry' positional argument is no longer used in the updated constructor. To avoid issues, do not include this argument when instantiating the class.

- Existing cameras must be deleted and then re-added or reconnected to a solution. To better reflect the real driver's functionality, the simulated Photoneo now returns only intensity images and point clouds by default.

- Please use intrinsic/perception/v1/pose_estimator_id.proto instead.

SDK & Dev Environment

- Learn how to report your service state here.

- The SDK repository has been moved from https://github.com/intrinsic-dev to https://github.com/intrinsic-ai. This will not impact any existing solutions or software you’ve built with the SDK.

Workcell Design & Simulation

- Enable optional material override in the scene object import dialog. Selecting 'None' will preserve the settings contained in the imported file.

- Externalize scene_object_import.proto to the SDK. Use this service to import scene files into Intrinsic SceneObject.

spawn_primitive_skill is now available.

- This spawn_primitive_skill can spawn primitive geometry in the world. It can spawn either a cuboid or a cylinder. It takes a list of poses and spawns the requested primitive at all of these poses. Please see inline help information for this skill in Flowstate.

- Assets can now be uninstalled from the asset panel by right-clicking on an asset and selecting 'Uninstall asset' from the context menu.

- The skill can create a world object in the belief world for an installed SceneObject asset. Please see inline help information for this skill in Flowstate.

Version 1.18 (Apr 21st, 2025)

This software release includes several breaking changes that may impact solutions running in both simulation (VM) and on hardware. We strongly recommend reviewing the V1.18 Software Release Update Guide to understand the necessary actions if you encounter any issues.

Control & Motion

- When using the Move-robot skill to preview motion segments, the visualization now incorporates path constraints and collision settings, offering a more accurate representation of the robot's motion. If a collision occurs, the object in collision will be highlighted, making it easier to identify problematic areas, especially in scenes with many objects.

- The Apply-force-actions skill now supports force-controlled pushing with a small oscillation, ideal for tasks like inserting connectors into tight housings. Additionally, it can now be configured to be compliant only in the motion direction while remaining stiff in all other directions.

- Fixed an issue in the robot posing panel where only the last updated joint value would take effect when adjusting multiple joints. Now, all specified joint values are correctly applied.

Infrastructure

- Software release V1.18 is not backwards compatible with previous versions of software running on your Intrinsic-configured PC (IPC). If you have disabled automatic updates for your IPCs, you will need to manually update the IPC to continue.

- To make it easier to understand the sequence of actions and how they relate to each other, the logs for different skills are now shown in chronological order, based on their timestamps, in the text logs viewer.

- To quickly access the text logs viewer, you can now go to the “Window” drop down menu and select “Open Text Logs Viewer.”
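The timestamp-based ordering of skill logs mentioned above amounts to merging several per-skill streams into one timeline. The following is a minimal sketch of that idea, not the viewer's actual implementation: `LogEntry`, `merge_chronologically`, and the skill names and messages are made up for illustration, and each per-skill stream is assumed to be already sorted by time.

```python
import heapq
from datetime import datetime
from typing import NamedTuple


class LogEntry(NamedTuple):
    """Hypothetical log record; the real log format may differ."""
    timestamp: datetime
    source: str
    message: str


def merge_chronologically(*streams):
    """Merge per-skill log streams (each already time-ordered) into one list."""
    return list(heapq.merge(*streams, key=lambda entry: entry.timestamp))


move_logs = [
    LogEntry(datetime(2025, 4, 21, 9, 0, 0), "move_robot", "planning started"),
    LogEntry(datetime(2025, 4, 21, 9, 0, 3), "move_robot", "motion finished"),
]
grasp_logs = [
    LogEntry(datetime(2025, 4, 21, 9, 0, 1), "grasp", "gripper opening"),
    LogEntry(datetime(2025, 4, 21, 9, 0, 4), "grasp", "gripper closed"),
]

merged = merge_chronologically(move_logs, grasp_logs)
for entry in merged:
    print(entry.timestamp.time(), entry.source, entry.message)
```

Interleaving by timestamp shows that the gripper started opening while the motion was still running, which is hard to see when each skill's log is read separately.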

Perception

- You can now specify post-modification pose filters in the “Modify-pose” skill, allowing filters to be applied after the modifiers. Previously, filters were applied before the modifiers, which in some cases led to them being ignored. With this update, applying the filter after the modifier ensures it is no longer overlooked.
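Why the order of filters and modifiers matters can be shown with a toy example. This sketch does not use the Modify-pose skill's real parameters: the `Pose` tuple, `lower_by`, `min_height`, and both pipeline functions are hypothetical stand-ins, and poses are reduced to positions for brevity.

```python
from typing import Callable, List, Tuple

Pose = Tuple[float, float, float]  # (x, y, z) in meters; orientation omitted


def lower_by(dz: float) -> Callable[[Pose], Pose]:
    """Hypothetical modifier: shift a pose down along z."""
    return lambda p: (p[0], p[1], p[2] - dz)


def min_height(z_min: float) -> Callable[[Pose], bool]:
    """Hypothetical filter: keep only poses at or above z_min."""
    return lambda p: p[2] >= z_min


def filter_then_modify(poses: List[Pose], keep, modify) -> List[Pose]:
    """Earlier order: the filter only ever sees the unmodified poses."""
    return [modify(p) for p in poses if keep(p)]


def modify_then_filter(poses: List[Pose], keep, modify) -> List[Pose]:
    """Post-modification order: the filter checks the final poses."""
    return [q for q in (modify(p) for p in poses) if keep(q)]


poses = [(0.0, 0.0, 0.12), (0.1, 0.0, 0.20)]
keep = min_height(0.10)
modify = lower_by(0.05)

pre = filter_then_modify(poses, keep, modify)
post = modify_then_filter(poses, keep, modify)
print(len(pre), len(post))  # 2 1
```

With the filter applied first, the pose at z = 0.12 m passes the check and is then lowered below the 0.10 m limit, so the constraint is effectively ignored. Filtering after the modifier removes it.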

Process Development

- The process editor now has a minimap that makes navigating large processes easier: pan the minimap canvas to quickly jump to another position in the main process window.

- When selecting multiple nodes in your Flowstate process, it's now easier to see whether they are connected or not. Disconnected nodes are highlighted with a dashed border, while connected nodes have a solid border. Additionally, the total number of selected nodes will now be displayed.

SDK & Dev Environment

- To avoid any issues using our SDK, please update to Bazel 8.1.1 by following these instructions.

- The following elements have been deprecated and will be removed in future releases:

- The behavior_tree_state will no longer be supported. Please use operation_state to identify the canonical state of an operation moving forward.

- The cel_expression option in the data node has been deprecated. Replace the blackboard_key with the cel_expression in all places that use the blackboard_key. E.g., for a data node with blackboard key 'foo' and cel_expression 'skill_return.bar', when 'foo' is used in any assignment, replace that assignment by 'skill_return.bar'.

- The from_world option in the data node has been deprecated. Replace this, e.g., by a task node with Python code execution.

- Data nodes with the protos field and for each loops have been deprecated. Please use a while loop instead.

- To properly implement the API, executive clients must no longer assume that there is always just a single operation.

- use_default_plan has been deprecated when creating a new executive operation. You should now specify a behavior tree explicitly.

- The instance_name field in a BehaviorCall has been deprecated and should no longer be set.

- The skill_execution_data field in the BehaviorCall has been deprecated and should no longer be read or set.

- The behavior_tree_state field in the executive RunMetadata has been replaced by operation_state. Use this instead for the canonical state of an operation and handle the additional state PREPARING appropriately. PREPARING is active when an execution has been requested but the tree has not started yet, for example because it is waiting for a simulation reset.

Solution Editor

- The top bar menu in Flowstate now includes a persistence state indicator. This provides feedback on your solution’s save status, showing when all components are saved and, if not, specifying which elements (e.g., process, scene, pose estimators) remain unsaved.

Workcell Design and Simulation

- You can now easily track your actions in the process and scene editors with the new "history panel" in Flowstate. Located on the left side, beneath the properties panel, the history panel displays a list of process and scene edits currently in the undo/redo queue, with the most recent edit highlighted. Actions that can’t be undone will appear greyed out and will be skipped during undo and redo.

- The following visual indicators make it easier to identify changes in the scene tree and clarify which actions can be taken:

- Delta symbol: modifications to instances in the Execute world, whether by process, user, or service, that differ from the Initial world will be marked with a "Delta" icon in the scene tree.

- Plus symbol: instances added directly to the "Belief" world, such as frames or product instances, will be highlighted with a "+" symbol, indicating they are missing from the Initial world.

- Globe symbol: frames with the globe symbol can be renamed, reparented, and deleted. These global frames are not tied to a specific type. Frames tied to a type can only have their pose adjusted.

- To simplify toggling visibility, hiding or unhiding an object in the scene tree will now hide or unhide both the parent object and all its child objects. You can also toggle visibility for individual child objects to control each instance separately.

- To save time iterating on your workcell, toggling the visibility or locking an object in either the Initial or Belief worlds will now automatically update the other. Visibility settings are retained when executing a process.

- Flowstate now automatically computes inertial parameters for objects that don’t have user-specified values. Previously, objects without specified parameters defaulted to 1 kg mass and 1 kg·m² inertia, which caused unrealistic behavior in simulations, especially for small models. Now, inertial parameters are calculated based on the provided collision geometry. If a non-default mass or density is specified for the mesh, that value will be used for the computation.

- You can now save and load your camera view in Flowstate. To save the current view, simply go to the ‘View’ menu and select “Save Camera View.” To load it, click “Load Camera View.” The saved view persists even after a browser refresh, making it easier to focus on specific areas, like the robot or a particular viewpoint, while observing the process.

- You can now start or save a solution even if some "world updates" are missing or invalid. This allows you to continue working without getting blocked, and you can still reach out to support with error messages if needed.
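To see why the earlier 1 kg and 1 kg·m² defaults mentioned above were unrealistic for small models, it helps to compute inertial parameters for a simple shape by hand. The sketch below is not Flowstate's algorithm, which these notes do not describe. It assumes a box-shaped collision geometry with uniform density and uses the standard solid-cuboid formulas; `cuboid_inertial` is a hypothetical helper name.

```python
from typing import Tuple


def cuboid_inertial(
    size_m: Tuple[float, float, float], density_kg_m3: float
) -> Tuple[float, Tuple[float, float, float]]:
    """Return (mass, (ixx, iyy, izz)) for a solid, uniform cuboid about its center."""
    x, y, z = size_m
    mass = density_kg_m3 * x * y * z
    # Principal moments of inertia of a solid cuboid about its center of mass.
    ixx = mass * (y**2 + z**2) / 12.0
    iyy = mass * (x**2 + z**2) / 12.0
    izz = mass * (x**2 + y**2) / 12.0
    return mass, (ixx, iyy, izz)


# Assumed example: a 2 cm cube with an aluminum-like density of 2700 kg/m^3.
mass, (ixx, iyy, izz) = cuboid_inertial((0.02, 0.02, 0.02), 2700.0)
print(f"mass = {mass:.4f} kg")
print(f"ixx  = {ixx:.3e} kg*m^2")
print(f"old 1 kg*m^2 default is about {1.0 / ixx:,.0f} times too large")
```

For this cube the mass is roughly 0.02 kg and each principal moment is on the order of 1e-6 kg·m², so a fixed 1 kg·m² inertia overstates the real value by several hundred thousand times.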